1. The tests were run on Windows 10 Home (64-bit).

2. Operation was checked in Spyder under Anaconda3 (64-bit).

3. The Python version is 3.6.5.

4. The PyTorch version is 0.4.1.

5. The GPU is an NVIDIA GeForce GTX 1050.

6. The CPU is an Intel Core(TM) i7-7700HQ.

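This time I worked through section 4.2.2, "CNNの構築と学習" (Building and training a CNN, pp. 67-71), of 現場で使える! PyTorch開発入門 深層学習モデルの作成とアプリケーションへの実装 (AI & TECHNOLOGY).

Continuing from the previous post, I ran some further checks on CNN image classification with Fashion-MNIST.

One issue left over from last time was that the book gives no concrete information about which predictions were right or wrong, so this time I modified the program to output the actual images and checked them.

Note: for details of the program, refer to the book (pp. 67-71).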

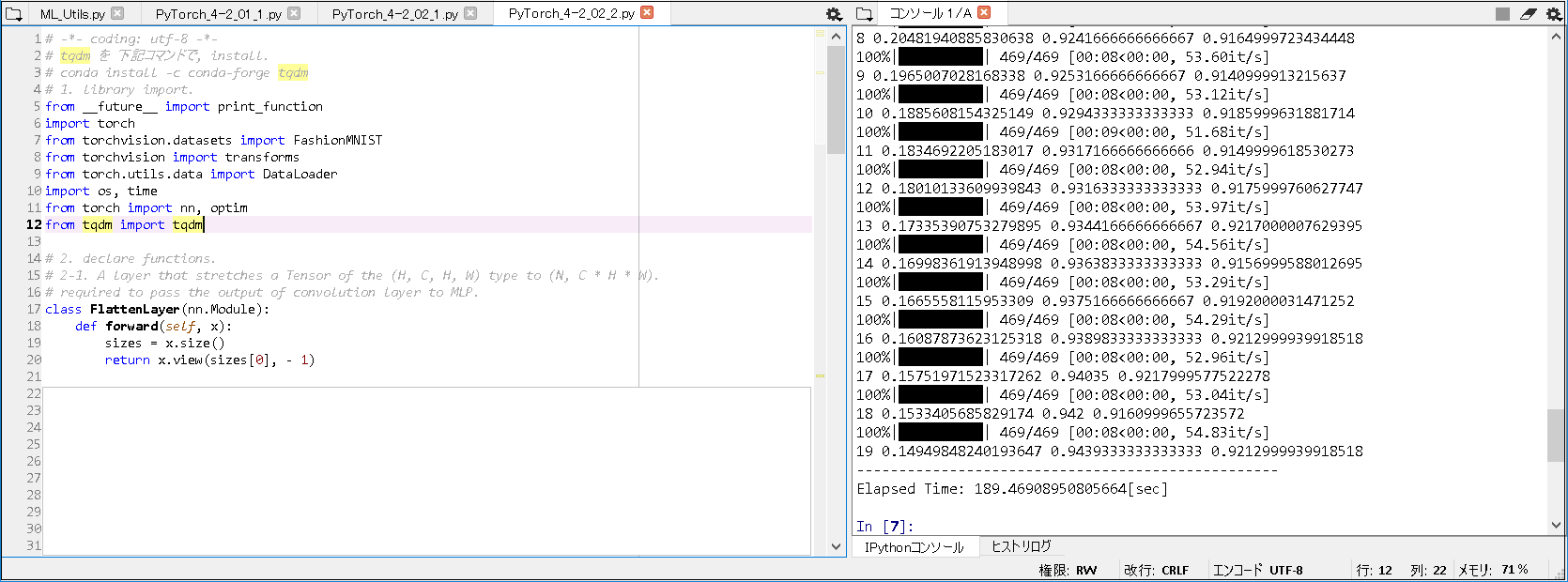

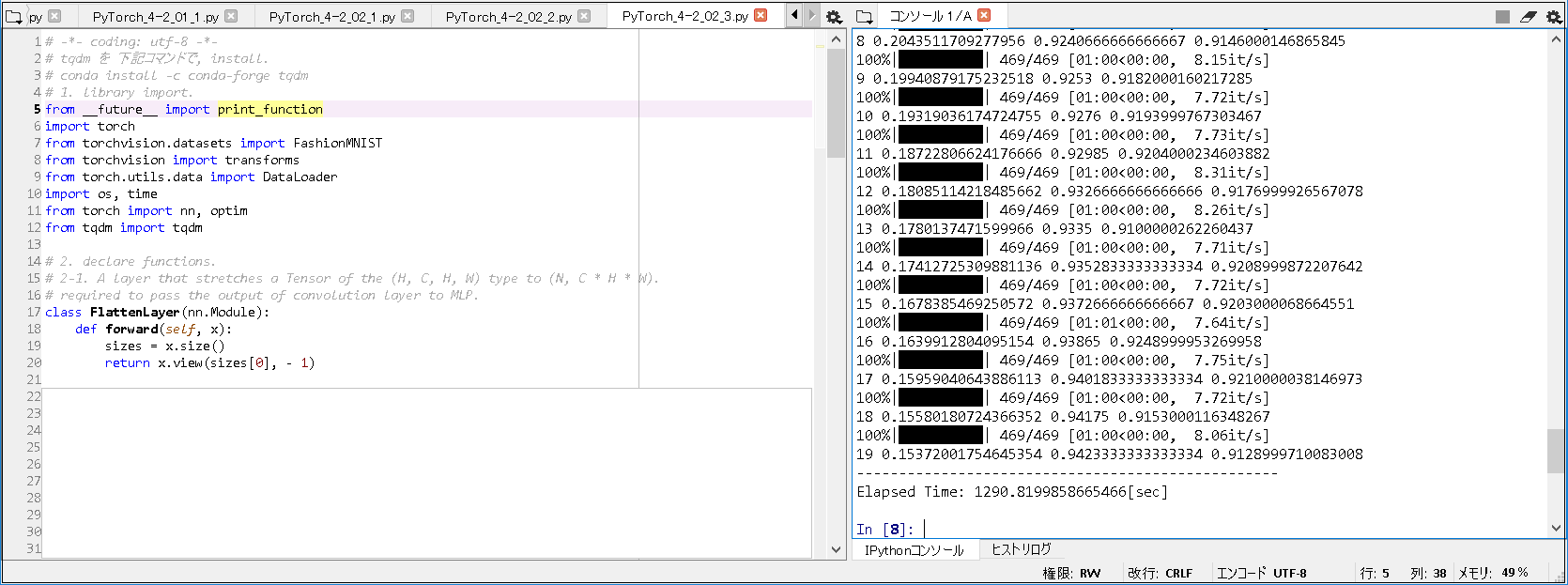

■ Training on Fashion-MNIST (version that outputs images of correct and wrong predictions)

    # -*- coding: utf-8 -*-

    # install tqdm with the command below.
    # conda install -c conda-forge tqdm

    # 1. library import.
    from __future__ import print_function
    import torch
    import torchvision
    from torchvision.datasets import FashionMNIST
    from torchvision import transforms
    from torch.utils.data import DataLoader
    import os, time
    from torch import nn, optim
    from tqdm import tqdm
    import numpy as np
    import matplotlib.pyplot as plt

    # 2. declare functions.
    # 2-1. display image function.
    def imshow(inp, title=None):
        """Imshow for Tensor."""
        inp = inp.numpy().transpose((1, 2, 0))
        mean = np.array([0.485, 0.456, 0.406])
        std = np.array([0.229, 0.224, 0.225])
        inp = std * inp + mean
        inp = np.clip(inp, 0, 1)
        plt.axis('off')
        plt.imshow(inp)
        if title is not None:
            plt.title(title)
        # plt.pause(0.001)  # pause a bit so that plots are updated

    # 2-2. A layer that stretches a Tensor of the (N, C, H, W) type to (N, C * H * W).
    # required to pass the output of convolution layer to MLP.
    class FlattenLayer(nn.Module):
        def forward(self, x):
            sizes = x.size()
            return x.view(sizes[0], -1)

    # ~(omitted)~

    # 2-4. eval helper function.
    def eval_net(net, data_loader, device="cpu"):
        # invalidate Dropout and BatchNorm.

        # ~(omitted)~

        # a = [1, 2, 3, 4, 5]
        # b = [1, 3, 4, 3, 5]
        # c = [i for i, j in zip(a, b) if i == j]
        # print(c)  # [1, 5]

        # [index, i, j] means: image index, correct label, predicted label.
        correct, wrong = [], []
        for index, (i, j) in enumerate(zip(ys, ypreds)):
            if i == j:
                correct.append([index, i, j])
            else:
                wrong.append([index, i, j])

        return acc.item(), correct, wrong

    # ~(omitted)~

    # 2-5. train net helper function.
    def train_net(net, train_loader, test_loader, optimizer_cls=optim.Adam,
                  loss_fn=nn.CrossEntropyLoss(), n_iter=10, device="cpu"):
        train_losses, train_acc, val_acc = [], [], []
        optimizer = optimizer_cls(net.parameters())
        correct_list, wrong_list = [], []

        # ~(omitted)~

            e = eval_net(net, test_loader, device)
            val_acc.append(e[0])
            # keep the correct/wrong lists of the final epoch only.
            if epoch + 1 == n_iter:
                correct_list.append(e[1])
                wrong_list.append(e[2])

            print(epoch, train_losses[-1], train_acc[-1], val_acc[-1], flush=True)

        return correct_list, wrong_list

    # ~(omitted)~

    # 5. execute training.
    net.to("cuda:0")
    correct_list, wrong_list = train_net(net, train_loader, test_loader, n_iter=1, device="cuda:0")

    # 6. display processing time.
    end = time.time()

    # images: torch.Size([128, 1, 28, 28])
    # images, labels: <class 'torch.Tensor'>
    # images[0]: torch.Size([1, 28, 28])
    images, labels = next(iter(test_loader))
    # print(images.size())  # check the data size.

    print('--- display 9 wrong answers ------------------------------------------')
    # wrong_list[0][0:9][0]: <class 'list'>, wrong_list[0][0:9][0][1]: <class 'torch.Tensor'>
    print(wrong_list[0][0:9])
    indexes_for_wrong_list_image = [x[0] for x in wrong_list[0][0:9]]
    print(str(indexes_for_wrong_list_image))  # ex. [17, 23, 40, 42, 46, 49, 68, 74, 98]

    wrong_images_list = []
    for i, v in enumerate(images):
        if i in indexes_for_wrong_list_image:
            # print(i)
            # size of a validation image (28 x 28)
            # v[0].size(): torch.Size([28, 28])
            # print(v[0].size())

            # Adding a dimension to a tensor in PyTorch.
            # http://blog.outcome.io/adding-a-dimension-to-a-tensor-in-pytorch/
            # uv: torch.Size([28, 28]) -> torch.Size([1, 28, 28])
            uv = v[0][None, :, :]
            wrong_images_list.append(uv)

    # How to turn a list of tensor to tensor?
    # https://discuss.pytorch.org/t/how-to-turn-a-list-of-tensor-to-tensor/8868/4
    # -> convert list to torch.Tensor by torch.stack.
    wrong_images_list = torch.stack(wrong_images_list)
    print(wrong_images_list.size())  # torch.Size([9, 1, 28, 28])

    wrong_images = torchvision.utils.make_grid(wrong_images_list, nrow=3, padding=1)
    plt.subplot(121)
    plt.title('wrong')
    imshow(wrong_images)

    print('--- display 9 correct answers ----------------------------------------')
    print(correct_list[0][0:9])
    indexes_for_correct_list_image = [x[0] for x in correct_list[0][0:9]]
    print(str(indexes_for_correct_list_image))  # ex. [0, 1, 2, 3, 4, 5, 6, 7, 8]

    correct_images_list = []
    for i, v in enumerate(images):
        if i in indexes_for_correct_list_image:
            uv = v[0][None, :, :]
            correct_images_list.append(uv)

    correct_images_list = torch.stack(correct_images_list)
    # print(correct_images_list.size())

    correct_images = torchvision.utils.make_grid(correct_images_list, nrow=3, padding=1)
    plt.subplot(122)
    plt.title('correct')
    imshow(correct_images)

    print('--------------------------------------------------')
    print('Elapsed Time: ' + str(end - start) + "[sec]")
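The selection loop in the listing picks images out of the first test batch one at a time, restores the channel dimension with `None` indexing, and recombines them with `torch.stack`. As a minimal sketch, assuming a batch of random values in place of the real `test_loader` output (the names `dummy_images` and `picked_indexes` are hypothetical and not from the book), the same selection can probably also be written as a single indexing step:

```python
import torch

# Dummy stand-in for one batch from test_loader: (N, C, H, W) = (128, 1, 28, 28).
dummy_images = torch.rand(128, 1, 28, 28)
# Hypothetical list of image indexes, sorted in ascending order.
picked_indexes = [12, 17, 23, 25, 42, 46, 49, 57, 66]

# Loop version, as in the listing: v[0] is (28, 28),
# and [None, :, :] turns it back into (1, 28, 28).
looped = torch.stack([v[0][None, :, :]
                      for i, v in enumerate(dummy_images)
                      if i in picked_indexes])

# Indexing version: a list of ints selects entries along dim 0 in one step.
indexed = dummy_images[picked_indexes]

print(looped.size())                 # torch.Size([9, 1, 28, 28])
print(torch.equal(looped, indexed))  # True
```

Either form only works for indexes smaller than the batch size (128 here), because `next(iter(test_loader))` returns just the first batch.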

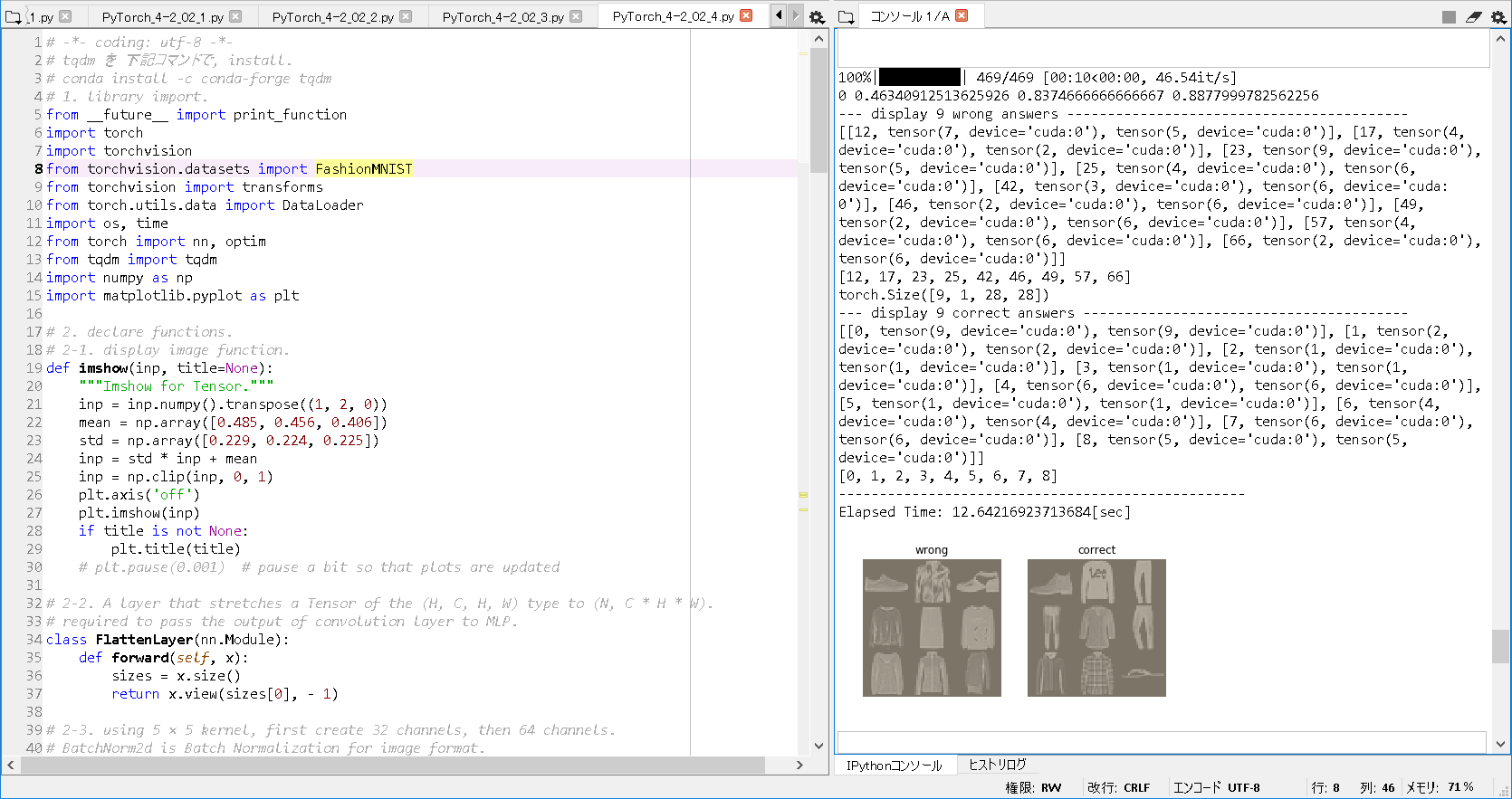

■ Execution result (epoch = 1)

    100%|██████████| 469/469 [00:10<00:00, 46.54it/s]
    0 0.46340912513625926 0.8374666666666667 0.8877999782562256
    --- display 9 wrong answers ------------------------------------------
    [[12, tensor(7, device='cuda:0'), tensor(5, device='cuda:0')], [17, tensor(4, device='cuda:0'), tensor(2, device='cuda:0')], [23, tensor(9, device='cuda:0'), tensor(5, device='cuda:0')], [25, tensor(4, device='cuda:0'), tensor(6, device='cuda:0')], [42, tensor(3, device='cuda:0'), tensor(6, device='cuda:0')], [46, tensor(2, device='cuda:0'), tensor(6, device='cuda:0')], [49, tensor(2, device='cuda:0'), tensor(6, device='cuda:0')], [57, tensor(4, device='cuda:0'), tensor(6, device='cuda:0')], [66, tensor(2, device='cuda:0'), tensor(6, device='cuda:0')]]
    [12, 17, 23, 25, 42, 46, 49, 57, 66]
    torch.Size([9, 1, 28, 28])
    --- display 9 correct answers ----------------------------------------
    [[0, tensor(9, device='cuda:0'), tensor(9, device='cuda:0')], [1, tensor(2, device='cuda:0'), tensor(2, device='cuda:0')], [2, tensor(1, device='cuda:0'), tensor(1, device='cuda:0')], [3, tensor(1, device='cuda:0'), tensor(1, device='cuda:0')], [4, tensor(6, device='cuda:0'), tensor(6, device='cuda:0')], [5, tensor(1, device='cuda:0'), tensor(1, device='cuda:0')], [6, tensor(4, device='cuda:0'), tensor(4, device='cuda:0')], [7, tensor(6, device='cuda:0'), tensor(6, device='cuda:0')], [8, tensor(5, device='cuda:0'), tensor(5, device='cuda:0')]]
    [0, 1, 2, 3, 4, 5, 6, 7, 8]
    --------------------------------------------------
    Elapsed Time: 12.64216923713684[sec]

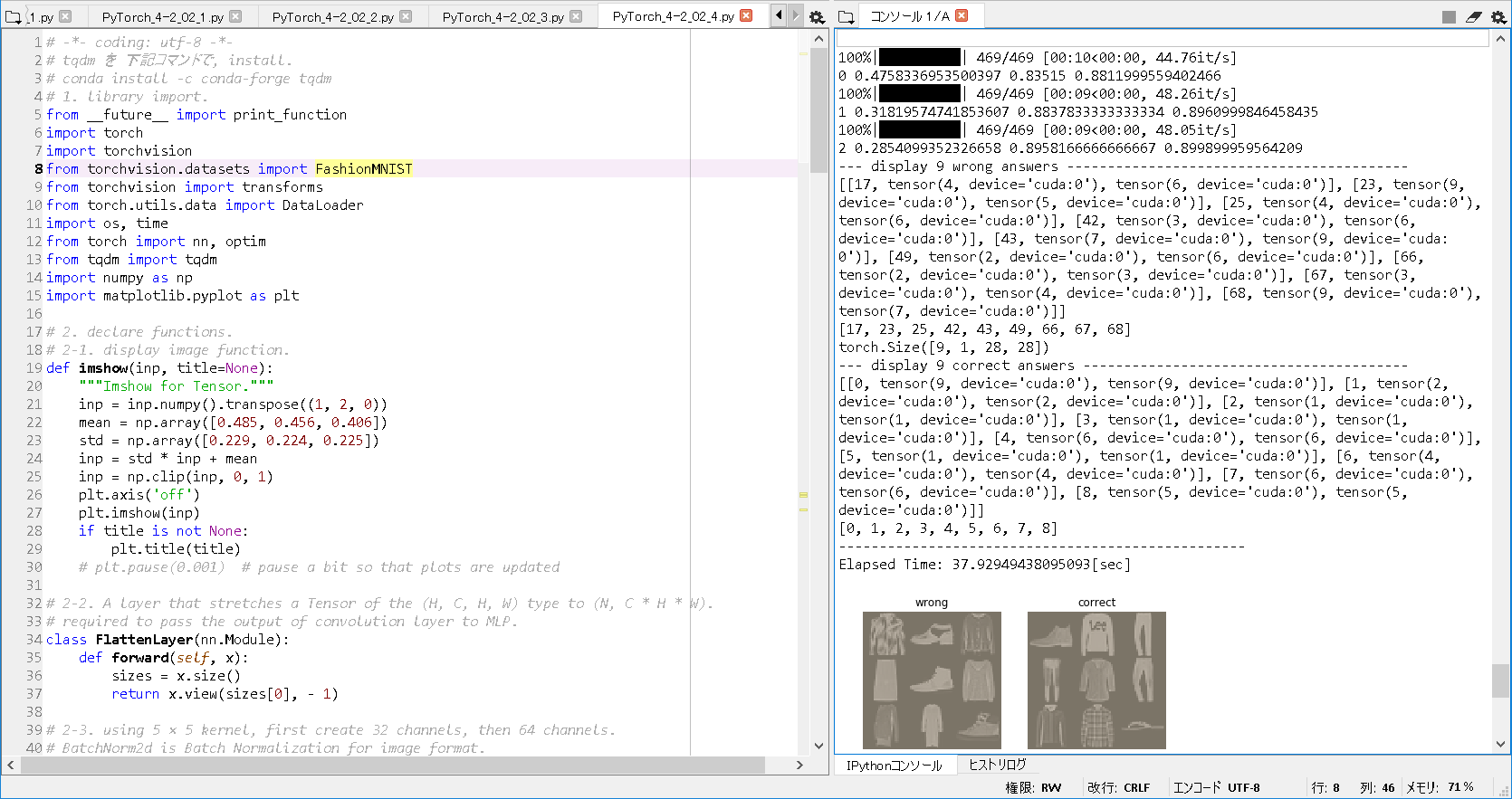

■ Execution result (epoch = 3)

    100%|██████████| 469/469 [00:10<00:00, 44.76it/s]
    0 0.4758336953500397 0.83515 0.8811999559402466
    100%|██████████| 469/469 [00:09<00:00, 48.26it/s]
    1 0.31819574741853607 0.8837833333333334 0.8960999846458435
    100%|██████████| 469/469 [00:09<00:00, 48.05it/s]
    2 0.2854099352326658 0.8958166666666667 0.899899959564209
    --- display 9 wrong answers ------------------------------------------
    [[17, tensor(4, device='cuda:0'), tensor(6, device='cuda:0')], [23, tensor(9, device='cuda:0'), tensor(5, device='cuda:0')], [25, tensor(4, device='cuda:0'), tensor(6, device='cuda:0')], [42, tensor(3, device='cuda:0'), tensor(6, device='cuda:0')], [43, tensor(7, device='cuda:0'), tensor(9, device='cuda:0')], [49, tensor(2, device='cuda:0'), tensor(6, device='cuda:0')], [66, tensor(2, device='cuda:0'), tensor(3, device='cuda:0')], [67, tensor(3, device='cuda:0'), tensor(4, device='cuda:0')], [68, tensor(9, device='cuda:0'), tensor(7, device='cuda:0')]]
    [17, 23, 25, 42, 43, 49, 66, 67, 68]
    torch.Size([9, 1, 28, 28])
    --- display 9 correct answers ----------------------------------------
    [[0, tensor(9, device='cuda:0'), tensor(9, device='cuda:0')], [1, tensor(2, device='cuda:0'), tensor(2, device='cuda:0')], [2, tensor(1, device='cuda:0'), tensor(1, device='cuda:0')], [3, tensor(1, device='cuda:0'), tensor(1, device='cuda:0')], [4, tensor(6, device='cuda:0'), tensor(6, device='cuda:0')], [5, tensor(1, device='cuda:0'), tensor(1, device='cuda:0')], [6, tensor(4, device='cuda:0'), tensor(4, device='cuda:0')], [7, tensor(6, device='cuda:0'), tensor(6, device='cuda:0')], [8, tensor(5, device='cuda:0'), tensor(5, device='cuda:0')]]
    [0, 1, 2, 3, 4, 5, 6, 7, 8]
    --------------------------------------------------
    Elapsed Time: 37.92949438095093[sec]
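Each epoch line printed by `train_net` contains the epoch number, the training loss, the training accuracy, and the validation accuracy, in that order. As a rough sketch, assuming the standard Fashion-MNIST test split of 10,000 images was used (the listing does not show how `test_loader` was built, and `misclassified_count` is a hypothetical helper), the final validation accuracies can be converted into approximate numbers of misclassified images:

```python
# Assumption: the evaluation ran over the standard 10,000-image test split.
TEST_SET_SIZE = 10000


def misclassified_count(accuracy, n_images=TEST_SET_SIZE):
    """Return the approximate number of wrong predictions for a given accuracy."""
    return round((1.0 - accuracy) * n_images)


# Final validation accuracies copied from the two execution results.
final_val_acc = {
    "epoch = 1": 0.8877999782562256,
    "epoch = 3": 0.899899959564209,
}

for run, acc in final_val_acc.items():
    print(run, misclassified_count(acc))
# epoch = 1 1122
# epoch = 3 1001
```

Under that assumption, the nine wrong answers shown in each run are only the first few of roughly a thousand.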

■ The execution results above show the following.

① With epoch = 1, for example, the Fashion-MNIST test image at index 12 was predicted as "Sandal" (tensor(5, device='cuda:0')), but the correct label was "Sneaker" (tensor(7, device='cuda:0')).

② With epoch = 3, for example, the Fashion-MNIST test image at index 25 was predicted as "Shirt" (tensor(6, device='cuda:0')), but the correct label was "Coat" (tensor(4, device='cuda:0')).

③ In both runs (epoch = 1 and epoch = 3), the predicted and correct labels matched for the images at indexes 0 to 8.
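The class names quoted in these findings follow the standard Fashion-MNIST label numbering (0 to 9). A minimal sketch of the lookup, where `CLASS_NAMES` and `describe` are illustrative names that do not appear in the book's code, and each entry uses the `[index, correct label, predicted label]` layout of the printed lists:

```python
# Standard Fashion-MNIST label numbering, 0 through 9.
CLASS_NAMES = [
    "T-shirt/top", "Trouser", "Pullover", "Dress", "Coat",
    "Sandal", "Shirt", "Sneaker", "Bag", "Ankle boot",
]


def describe(entry):
    """Format one [index, correct label, predicted label] entry as text.

    int() accepts plain ints as well as zero-dimensional tensors,
    so entries taken from wrong_list can be passed in unchanged.
    """
    index, true_label, pred_label = entry
    return "index {}: predicted {}, actual {}".format(
        index, CLASS_NAMES[int(pred_label)], CLASS_NAMES[int(true_label)])


print(describe([12, 7, 5]))  # index 12: predicted Sandal, actual Sneaker
print(describe([25, 4, 6]))  # index 25: predicted Shirt, actual Coat
```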

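■ Reference sites

Adding a dimension to a tensor in PyTorch. (http://blog.outcome.io/adding-a-dimension-to-a-tensor-in-pytorch/)

How to turn a list of tensor to tensor? (https://discuss.pytorch.org/t/how-to-turn-a-list-of-tensor-to-tensor/8868/4)

Fashion-MNIST

■ Reference book

現場で使える! PyTorch開発入門 深層学習モデルの作成とアプリケーションへの実装 (AI & TECHNOLOGY)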